In early March 2026, Amazon’s e-commerce website went down for several hours, and not for the first time within a short period. According to reports, an internal document linked the incidents to AI-assisted development processes. Amazon’s response: a 90-day “Code Safety Reset” for several hundred critical systems. More manual – that is, human – control. Two-person sign-off before every deployment. Deliberate deceleration.

What exactly happened at Amazon in those March days may one day be sorted out by technology historians. Viewed from the outside, the episode is remarkable in its own right. One of the digital Big Four pushes hard on the accelerator in AI-assisted software development, starts to stumble, and pulls the emergency brake – back to lengthy control and approval procedures.

I spoke with Rainer Stropek about the case. Rainer is well known in the community as a developer, author, and conference speaker whose enthusiasm for new technologies tends to be contagious. He has been advising European companies on the use of AI in their development processes for over two years. He also actively shapes our VibeKode Conference as a member of its Advisory Board.

So how could it happen that AI led Amazon into a dead end? And what can we – in a European engineering culture oriented more toward evolutionary change than disruption – learn from it?

Frankenstein Systems – AI Meets What It Doesn’t Know

Rainer’s clients work with applications that are 10, 15, or 20 years old. These systems are successful. They have an enormous customer base and form the backbone of thriving businesses. And that very success is also their burden: it has produced a codebase that has grown together from different architectural principles, designed by successive generations of developers, built from multiple generations of library versions, shaped by decisions that were right at the time and that nobody fully understands today. Rainer calls these systems “Frankenstein systems” – patched together, grown organically, without inner consistency.

What happens when AI is applied to such systems? It claims with great confidence that it can solve the problems. It generates hundreds of lines of code. At first glance, what it produces looks like a solution. At second glance, nothing has changed – the generated code is effectively useless.

The reason is structural: AI works well with what it was trained on. It knows and understands consistent, modern, well-documented codebases. In Frankenstein systems, it doesn’t find those patterns. It cannot read between the lines. It cannot activate the implicit knowledge that an experienced developer has built up over years.

Things become particularly critical with cross-cutting concerns – changes that don’t affect a single isolated component but touch many different areas simultaneously. Precisely where enterprise systems most often need to be adapted, AI support breaks down dramatically. The result is massive manual rework. And the AI has not helped.

The IDE Trap – When the Infrastructure Blinds the AI

The second problem is less visible but equally fundamental. It doesn’t concern the codebase itself – it concerns the tooling landscape in which the codebase lives.

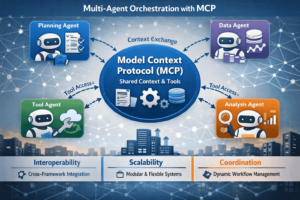

For AI to work autonomously and reliably, it needs an agentic loop: generate code, compile, run static analysis, execute unit tests, run integration tests – iteratively, autonomously, over and over again. Modern AI-friendly projects are designed for exactly this model from the start. All project operations can be controlled from the command line. The loop closes.
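
As a rough illustration, the loop described above could be sketched in Python as follows. This is a minimal sketch under stated assumptions, not Rainer's tooling or any particular agent's implementation: the `generate` callback stands in for whatever model or agent proposes a change, and the command lists passed as `steps` are placeholders for a project's real build, analysis, and test commands.

```python
import subprocess
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple

# A step is a label plus an argv list, e.g. ("unit tests", ["make", "test"]).
# The concrete commands are project-specific assumptions.
Step = Tuple[str, Sequence[str]]


@dataclass
class StepResult:
    name: str
    ok: bool
    output: str


def run_step(step: Step) -> StepResult:
    """Run one command-line step and capture its exit status and output."""
    name, command = step
    proc = subprocess.run(list(command), capture_output=True, text=True)
    return StepResult(name, proc.returncode == 0, proc.stdout + proc.stderr)


def first_failure(steps: Sequence[Step]) -> Optional[StepResult]:
    """Run the steps in order and return the first failing one, if any."""
    for step in steps:
        result = run_step(step)
        if not result.ok:
            return result
    return None


def agentic_loop(
    generate: Callable[[Optional[str]], None],
    steps: Sequence[Step],
    max_iterations: int = 5,
) -> bool:
    """Generate, verify, and retry until all steps pass or the budget runs out.

    `generate` is a hypothetical callback that edits the working tree; it
    receives the output of the last failed step (or None on the first pass).
    """
    feedback: Optional[str] = None
    for _ in range(max_iterations):
        generate(feedback)
        failure = first_failure(steps)
        if failure is None:
            return True  # build, analysis, and tests all passed
        feedback = f"{failure.name} failed:\n{failure.output}"
    return False
```

The sketch only works because every verification step is an ordinary command with an exit code and readable output. If one of those steps exists only as a button in a graphical tool, there is nothing to put into `steps`.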

In legacy enterprise applications, this is structurally impossible.

Why? Because these systems have been tightly coupled to heavyweight IDEs – Visual Studio, IntelliJ – over many years. These IDEs were built to give developers a comfortable cockpit that hides much of the underlying complexity. A single click on “Build” triggers a large number of background processes that are completely invisible to the user, thanks to the IDE.

As helpful as IDEs are for human developers, they are deeply problematic for AI. An AI agent has no idea what Visual Studio is. It cannot click the button. It cannot access the build pipeline hidden behind the IDE’s interface. The agentic loop is structurally broken.

What remains: the AI generates code whose quality it cannot verify itself – because it cannot close the loop.

That distinction – whether all project operations are accessible from the command line or not – is what Rainer calls the central dividing line today. It’s not about the quality of the model. Not the experience of the team. It is the infrastructure.

His first piece of advice to every client: make sure every team member can build, run a linter, and start tests from the command line. This is not a nice-to-have modernization. It is the prerequisite without which AI integration fundamentally cannot work – regardless of which model is used.

In Rainer’s view, the classic IDE paradigm – as embodied by tools like Visual Studio, IntelliJ or Eclipse – is hitting a dead end. These environments were designed around a single developer working in deep focus on one task at a time. That was the right model for its era.

What teams actually need today is something different: lightweight environments where multiple projects can be open simultaneously, each running several parallel agents alongside terminal windows, browser views, and debuggers. The workflows are concurrent, not sequential. The unit of work is no longer a single developer in a single context.

Lightweight editors like VS Code are much closer to this model than their heavyweight counterparts. And the first larger steps in this direction are already visible: OpenAI Codex is one early signal from a major lab. Open-source projects like t3code are moving there too. Heavy IDEs may well have a future – but only if they are redesigned from the ground up around this new reality. The ones that don’t adapt will become bottlenecks.

The CLI, meanwhile, is experiencing its own renaissance – not as a replacement for IDEs, but as the underlying connective tissue that makes all of this automatable and accessible to agents in the first place.

The Prototype Trap – When Single Cases Distort Perception

The third area is not about technical infrastructure but about human perception – and about organizational decisions built on distorted perception.

Rainer describes a case where an employee – not an engineer, but capable of vibe coding – spent two weeks building an internal application that completely upended a business workflow, generating savings of tens of millions of euros per quarter. The news echoed all the way to senior leadership.

What leadership sees: non-technical staff can now write software – software that is much closer to the business than anything before. The conclusion: we no longer need IT, let’s turn our product owners into developers.

What turned out to be the case: the software was running on the employee’s laptop. He didn’t understand why it was inactive at night. He didn’t know what client and server meant. When he shut down his computer and took it home, the application stopped running.

The prototype was valuable – Rainer wants to be clear about this. As a proof of concept, as a demonstration of business potential: a dream! For solo solutions, small teams, applications on a local network without major security risks: excellent. The problem was not the tool. The problem was the conclusion.

What really matters is everything enterprise engineers have internalized over the years: Git branching strategies, merge conflicts, version management, database migrations, rollout and rollback strategies, the behavior of distributed systems during partial updates, testing in cloud environments.

What was missing in this example is an awareness of the complexity of the overall system. The instinct that at every point where you make a change, further dependencies are at play. That stability, security, and scalability don’t come from features – they come from architecture and process discipline.

What Can Be Done – and What Comes First

One response to all of this might be a kind of AI resignation – if the problems appear so much larger than the opportunities, why bother? But let’s focus instead on approaches that help overcome these dilemmas.

So I wanted to know from Rainer: what can be done? What follows are not ready-made recipes. But they are honest directions, and they match what I hear again and again across the community about what is urgently needed.

Become AI-Ready First – Then Introduce AI

Concretely: API keys must not be sitting in files. Single sign-on. Multifactor authentication. Proper distributed authentication. Unit tests. Automated multi-stage deployment. None of this is new. It has been preached at developer conferences for 15 years. Those who have done it are benefiting now. Those who haven’t are facing a structural problem that AI does not solve – it makes it worse.
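
To make the first item concrete: instead of reading an API key from a file that lives next to the code, a program can expect the key in its process environment, where a secret store or the deployment pipeline places it at runtime. A minimal Python sketch, assuming a hypothetical variable name `EXAMPLE_API_KEY` (the name and the helper are illustrative, not taken from any specific product):

```python
import os


def require_secret(name: str) -> str:
    """Return a secret from the environment, failing loudly if it is absent."""
    value = os.environ.get(name, "").strip()
    if not value:
        # Fail at startup rather than falling back to a key stored in a file.
        raise RuntimeError(f"Missing secret: set the {name} environment variable")
    return value
```

A service would call `require_secret("EXAMPLE_API_KEY")` once at startup. The same code then runs unchanged on a developer machine, in a test pipeline, and in production, and no key ends up in the repository where an agent or a colleague could copy it by accident.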

For systems that have grown under changing requirements over many years, this means gradual restructuring. Many of these applications have accumulated extensions and compromises and are now difficult to navigate. The approach is classical modularization: parts of the system are extracted and moved into clearly bounded, independently workable units – often along service boundaries familiar from the microservices world.

Rainer compares this to a construction project: the existing building stays standing while something new is built alongside it. Parts move over, others are replaced. Uncomfortable, but realistic.

The CLI as a Prerequisite, Not an Option

CLI capability is the foundational prerequisite. Before thinking about model selection, prompt engineering, or agentic workflows, one question must be answered: can every team member build, run a linter, and start tests from the command line? If not, any AI integration is structurally constrained.

This is an infrastructure project and must be treated as one. And it is the prerequisite for everything that follows.
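
One way to answer that question mechanically is a small readiness check that confirms each operation has a command-line entry point on the current machine. The sketch below is illustrative only: the operation names and the commands mapped to them are assumptions that each project would replace with its own, and it checks only that the executable can be found, not that the build actually succeeds.

```python
import shutil
from typing import Dict, List, Sequence

# The three operations named in the text; extend as needed.
REQUIRED_OPERATIONS = ("build", "lint", "test")


def cli_gaps(commands: Dict[str, Sequence[str]]) -> List[str]:
    """List the required operations that cannot be started from a shell.

    `commands` maps an operation name to its argv list. An operation counts
    as a gap if it has no command or its executable is not found on PATH.
    """
    gaps = []
    for operation in REQUIRED_OPERATIONS:
        argv = commands.get(operation)
        if not argv or shutil.which(argv[0]) is None:
            gaps.append(operation)
    return gaps
```

A project would call `cli_gaps` with its own mapping, for example `{"build": ["make"], "lint": ["make", "lint"], "test": ["make", "test"]}` (placeholder commands), and treat a non-empty result as work to finish before any agent is introduced.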

Prototyping Is Prototyping. Production Is Something Else.

The value of well-made prototypes is undisputed. Product owners who can check their ideas directly against the existing codebase – does this make sense? Are there inconsistencies? How complex is what we’re asking for? – gain an autonomy that previously required lengthy interviews with senior architects. A clickable prototype as a basis for discussion is worth more than any requirements document.

But the line must be clear: prototyping stays prototyping. Production is production. The bridge between the two – translating a prototype into stable, scalable, operable software – is the actual engineering task. It does not get easier just because the prototype was built faster thanks to AI.

What I Take Away From This Conversation

AI does not make classic engineering dispensable – on the contrary, it demands structure, clarity, and discipline to the highest degree. Amazon is currently finding that out the hard way. The good news: you don’t have to.

What Rainer describes makes sense to me: the best results consistently emerge where people with genuine curiosity about AI meet experienced people who truly understand enterprise complexity. Where one explores fearlessly and the other knows what the dependencies are and what is at stake. When these two groups work together, what emerges – in Rainer’s words – are diamonds.

The speed that AI promises will come. The question companies need to ask themselves is: do we have the structures to support it? That is one of the central questions at the VibeKode Conference. And it is the question I recommend everyone ask themselves honestly – before the first deployment goes wrong.

AI makes dramatic acceleration possible. Engineering decides whether that speed holds.

🔍 Frequently Asked Questions (FAQ)

1. What is the main risk of using AI in software development?

AI introduces significant acceleration in code generation, but when applied only locally, it can destabilize the overall system. The core issue is that local optimization does not equal system optimization. Without structural alignment, AI-generated changes can introduce inconsistencies and hidden failures.

2. Why did AI-assisted development cause issues in enterprise systems like Amazon?

Reported incidents suggest that AI-assisted development contributed to system instability, leading to outages and a “Code Safety Reset.” The response included stricter manual controls and deployment reviews. This highlights that rapid AI adoption without safeguards can increase operational risk.

3. What are “Frankenstein systems” in software engineering?

“Frankenstein systems” are legacy applications that evolved over many years with inconsistent architecture, mixed technologies, and undocumented decisions. These systems lack internal coherence, making them difficult for AI to interpret or modify effectively.

4. Why does AI struggle with legacy enterprise codebases?

AI performs best on structured, modern, and well-documented codebases. In legacy systems, implicit knowledge and inconsistent patterns prevent AI from generating meaningful solutions. As a result, generated code often requires extensive manual correction.

5. What is the “agentic loop” and why is it important for AI coding?

The agentic loop refers to an automated cycle where AI generates code, compiles it, runs tests, and iterates independently. This loop is essential for reliable AI-driven development. Without it, AI cannot validate its own output.

6. Why do traditional IDE-based systems limit AI effectiveness?

Legacy systems often rely on IDEs like Visual Studio or IntelliJ, where critical processes are hidden behind graphical interfaces. AI agents cannot interact with these interfaces, breaking the automation loop. This prevents AI from fully integrating into the development workflow.

7. Why is command-line accessibility critical for AI integration?

Command-line interfaces (CLI) allow all build, test, and deployment steps to be executed programmatically. This is a prerequisite for enabling AI-driven automation and agentic workflows. Without CLI access, AI integration remains structurally limited.

8. What is the “prototype trap” in AI-driven development?

The prototype trap occurs when successful AI-generated prototypes lead organizations to underestimate the complexity of production systems. While prototypes demonstrate value, they often lack scalability, security, and operational robustness. Misinterpreting them can lead to flawed strategic decisions.

9. What does it mean to become “AI-ready” in software engineering?

Being AI-ready involves establishing strong engineering foundations such as secure authentication, automated testing, modular architecture, and CI/CD pipelines. These practices enable AI to operate effectively within a system. Without them, AI amplifies existing weaknesses instead of solving them.

10. How should teams balance AI speed with engineering discipline?

AI can significantly accelerate development, but stability, security, and scalability still depend on disciplined engineering practices. The most effective teams combine AI experimentation with deep system understanding. This balance ensures that speed does not compromise system integrity.